Google's New TPU Chips Bet Big on the Agentic AI Era

Google unveils twin eighth-generation TPUs at Cloud Next 2026, purpose-built for training and autonomous AI agents, as Alphabet commits up to $185 billion in infrastructure spending this year.

Key Takeaways

- Google has split its TPU line into two specialised eighth-generation chips: the 8t for large-scale training and the 8i for agentic inference, signalling a fundamental shift in how AI hardware is architected.

- Google reports up to 2.7x better performance-per-dollar on large-scale training and an 80% improvement in performance-per-dollar on low-latency inference, gains that position it to compress the cost curve as enterprise agentic workloads scale beyond chatbots.

- Alphabet’s $175–185 billion capex guidance for 2026, alongside a $240 billion Google Cloud backlog, reflects a conviction that custom silicon will be the decisive moat in the race to profit from autonomous AI deployment.

The Fork in the Road

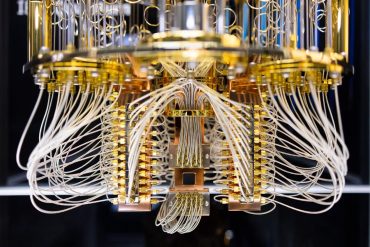

For a decade, Google’s Tensor Processing Units represented a quiet competitive advantage: custom silicon designed internally, tuned relentlessly, and deployed at a scale that few rivals could match. The first TPUs appeared in 2016, largely invisible to the outside world. By the time the sixth generation, Trillium, reached general availability in December 2024, the accumulated engineering lead had become a structural asset. Trillium delivered 4.7 times the peak per-chip compute of its predecessor and improved energy efficiency by 67 percent. Ironwood, the seventh generation, followed in November 2025 with more than four times the per-chip performance of Trillium and a superpod configuration scaling to 9,216 chips.

What Google announced at Cloud Next in Las Vegas on April 22, 2026, was different in character. Rather than a single successor chip, the company introduced two: the TPU 8t, engineered for pre-training and embedding-heavy workloads, and the TPU 8i, built for post-training, sampling, serving, and the iterative reasoning that autonomous AI systems require. The bifurcation is not a product-line flourish. It is an architectural acknowledgement that the demands of training a frontier model and running an autonomous agent at scale are sufficiently distinct to warrant separate hardware.

Why Agentic AI Changes the Hardware Problem

The shift toward agentic AI systems, those capable of multi-turn reasoning, tool use, long-context processing, and sequential decision-making, has exposed bottlenecks that general-purpose accelerators handle poorly. Training workloads are relatively predictable: large matrix operations, regular data flows, and well-understood parallelism. Inference for autonomous agents is not. Key-value caches grow large and irregular. Sequences extend far beyond the context windows that earlier inference hardware was designed around. Collective communications between chips, required for coordinated reasoning across distributed systems, introduce latency that compounds at every step of a reasoning chain.
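To make the key-value cache pressure concrete, the sketch below estimates cache size per sequence using the standard transformer accounting: one key tensor and one value tensor per layer. The model dimensions are hypothetical and do not describe any Google model; what matters is how the total scales with sequence length.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim, bytes_per_elem=2):
    """Bytes of key-value cache held for one sequence."""
    # Factor of 2: one key tensor and one value tensor per layer.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_elem


# Hypothetical model shape, chosen only for illustration (assumption).
MODEL = dict(n_layers=80, n_kv_heads=8, head_dim=128)

for tokens in (8_192, 131_072, 1_048_576):
    gib = kv_cache_bytes(tokens, **MODEL) / 2**30
    print(f"{tokens:>9,} tokens -> {gib:7.1f} GiB per sequence")
```

Under these assumed dimensions the cache grows linearly, from 2.5 GiB at 8K tokens to 320 GiB at roughly one million tokens. A long-running agent that keeps its full history in context can therefore outgrow the memory of a single accelerator on cache alone.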

The TPU 8i addresses these constraints with deliberate specificity. Its hierarchical Boardfly topology reduces network diameter to seven hops, roughly half that of a conventional torus architecture, while a new Collectives Acceleration Engine cuts collective-operation latency by a factor of five. With 288 GB of high-bandwidth memory, 384 MB of on-chip SRAM (three times the prior generation), and 8,601 GB/s of memory bandwidth, it is configured for precisely the workloads that will define enterprise AI over the next several years: extended reasoning loops, retrieval-augmented generation, and multi-agent orchestration.
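One way to read the memory figures: token-by-token decoding is often limited by how quickly weights and cache can be read from memory, so bandwidth sets a floor on the latency of each step. The sketch below uses the 288 GB and 8,601 GB/s figures quoted above. The per-step working-set sizes are hypothetical, and the calculation ignores compute time, on-chip SRAM reuse, and batching.

```python
HBM_CAPACITY_GB = 288        # TPU 8i figure quoted in the article
HBM_BANDWIDTH_GBPS = 8_601   # TPU 8i figure quoted in the article


def min_step_ms(bytes_read_gb, bandwidth_gbps=HBM_BANDWIDTH_GBPS):
    """Lower bound on one decode step if bytes_read_gb must stream from HBM."""
    return bytes_read_gb / bandwidth_gbps * 1_000


# Working-set sizes below are illustrative assumptions, not measured values.
for working_set_gb in (50, 150, HBM_CAPACITY_GB):
    ms = min_step_ms(working_set_gb)
    print(f"{working_set_gb:>3} GB read per step -> at least {ms:5.1f} ms, "
          f"at most {1_000 / ms:6.1f} steps/s")
```

Streaming the full 288 GB once takes roughly 33 ms at the quoted bandwidth, which is why a larger on-chip SRAM, by keeping hot data out of that path, matters for long reasoning loops.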

The TPU 8t, meanwhile, retains a 3D torus topology scalable to 9,600 chips per superpod and adds a third-generation SparseCore for embedding lookups, native FP4 support that doubles matrix-unit throughput, and a Vector Processing Unit that overlaps quantization and normalization with matrix operations. Its 216 GB of high-bandwidth memory and Virgo Network fabric delivering up to 47 petabits per second position it for the sustained, high-throughput demands of frontier model training.
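Lower-precision formats pay off in memory as well as throughput. As a rough sketch, the snippet below computes the weight footprint of a hypothetical one-trillion-parameter model at several precisions, along with the minimum number of 216 GB chips needed just to hold those weights. The parameter count is an assumption for illustration. The calculation ignores optimizer state, activations, and any scaling metadata an FP4 format may carry.

```python
import math

HBM_PER_CHIP_GB = 216          # TPU 8t figure quoted in the article
SUPERPOD_CHIPS = 9_600         # TPU 8t superpod size quoted in the article
PARAMS = 1_000_000_000_000     # hypothetical model size (assumption)

BYTES_PER_PARAM = {"BF16": 2.0, "FP8": 1.0, "FP4": 0.5}


def weight_footprint_gb(params, bytes_per_param):
    """Weight storage in decimal gigabytes."""
    return params * bytes_per_param / 1e9


for fmt, nbytes in BYTES_PER_PARAM.items():
    gb = weight_footprint_gb(PARAMS, nbytes)
    chips = math.ceil(gb / HBM_PER_CHIP_GB)
    print(f"{fmt}: {gb:,.0f} GB of weights, at least {chips} chips")

print(f"Superpod HBM: {SUPERPOD_CHIPS * HBM_PER_CHIP_GB / 1e6:.2f} PB")
```

Training needs far more memory than the weights alone, but the ratio between formats is the point: halving the bytes per parameter halves the data that must be stored and moved.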

The Economics of Autonomy

Performance metrics matter less as standalone achievements than as signals of economic intent. Google reports up to 2.7 times better performance-per-dollar for large-scale training on the 8t and an 80 percent improvement in performance-per-dollar for low-latency inference on the 8i, alongside a doubling of performance-per-watt across both chips. These are not incremental efficiency gains. They are structural cost improvements at a moment when inference already constitutes the dominant expenditure in deployed AI, and when agentic workloads, with their extended chains of thought and tool invocations, are poised to amplify that cost further.
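The performance-per-dollar figures can be restated as cost per unit of work, which is how a buyer would see them. This is a minimal sketch, assuming the vendor-reported multipliers hold for a given workload and that cost scales inversely with performance-per-dollar:

```python
def relative_cost(perf_per_dollar_gain):
    """Cost of a fixed amount of work relative to the baseline (1.0 = unchanged)."""
    return 1.0 / perf_per_dollar_gain


# Multipliers as reported by Google; real workloads may see less.
claims = {
    "8t training (up to 2.7x)": 2.7,
    "8i low-latency inference (+80%)": 1.8,
}

for label, gain in claims.items():
    cost = relative_cost(gain)
    print(f"{label}: {cost:.0%} of baseline cost, a {1 - cost:.0%} reduction")
```

At the headline multipliers, the same training work costs about 37 percent of the baseline and the same inference work about 56 percent. An 80 percent gain in performance-per-dollar is thus a cost cut of roughly 44 percent, not 80 percent.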

The competitive logic is straightforward. As enterprise customers move from chatbot deployments toward autonomous business processes, the unit economics of inference will increasingly determine which infrastructure providers they choose and at what margin. Google’s move to specialise hardware across the full AI lifecycle, from the first token of pre-training to the final output of a multi-agent reasoning loop, is a bid to own the cost structure of the agentic era before rivals can establish their own.

Software, Scale, and the Platform Play

Hardware in isolation rarely wins. The TPUs derive their commercial relevance from the software stack surrounding them. Google’s JAX and Pathways frameworks, refined over years of internal deployment, scale seamlessly to the new silicon. Native PyTorch support, currently in preview, lowers the adoption barrier for the broader developer community that has built its workflows on external frameworks.

At Cloud Next, Google also highlighted the Gemini Enterprise Agent Platform, designed to allow organisations to discover, govern, and scale AI agents within auditable, secure environments. Agentic Data Cloud and Workspace Intelligence extend the same architecture, giving agents persistent context across enterprise data and collaborative tools. Gemini 2.0, launched in December 2024, was positioned explicitly as a model for the agentic era; its successors are co-designed with the new silicon in mind. The hardware and software moves are tightly coupled, and that coupling is itself a competitive barrier.

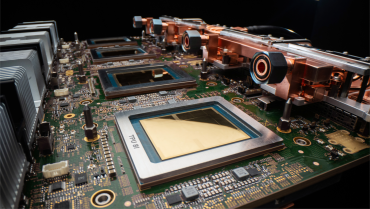

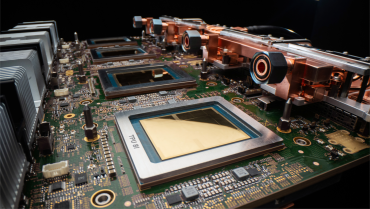

Alphabet’s Infrastructure Conviction

The financial backdrop is unambiguous. Alphabet’s capital expenditures reached $91.4 billion in 2025, nearly double the prior year. The company has guided for $175 billion to $185 billion in 2026, with spending concentrated in servers, networking, and data-centre capacity. Google Cloud revenues rose 48 percent year-on-year to $17.7 billion in the fourth quarter of 2025, pushing the annual run rate above $70 billion. The segment’s revenue backlog stood at $240 billion.

These commitments are underwritten by contracted demand. Multi-year agreements with Anthropic, covering up to one million TPUs, and infrastructure partnerships with Meta represent the kind of anchor relationships that justify the capital intensity of developing and manufacturing custom silicon at scale. Alphabet’s 2025 annual report flags the technical infrastructure, TPUs and GPUs together, as a core competitive differentiator, while candidly noting supply-chain concentration risks and the capital requirements of scaling further.

Markets have responded with measured confidence. Alphabet Inc. (NASDAQ: GOOGL) shares edged 1.5 percent higher in pre-market trading on the announcement day, consistent with a pattern in which hardware disclosures reinforce the investment thesis for Google’s full-stack AI position rather than generate speculative excitement. Separately, reported diversification talks with Marvell for memory and inference chips suggest a multi-vendor supply chain in formation, a prudent hedge against the concentration risks the company has publicly acknowledged.

The Infrastructure Layer That Decides the Margin

The challenges are real. Power availability and land constraints limit data-centre expansion timelines. Manufacturing lead times for advanced silicon remain subject to geopolitical and supply-chain uncertainty. The regulatory environment is tightening: the EU AI Act and an expanding body of U.S. state legislation are already shaping compliance costs, and any significant failure in model behaviour or data handling would invite heightened scrutiny. Software portability across the ecosystem must be maintained if TPUs are to sustain adoption beyond Google’s own workloads.

Against those headwinds, the strategic direction is coherent. For years, Google trained its models on TPUs while most of the industry relied on GPUs. It is now extending the same logic to inference and agentic deployment, the workloads that will generate the next margin pool. By splitting its accelerator line according to workload type rather than generation number, the company is signalling a more sophisticated view of AI’s infrastructure requirements: one in which hardware, models, and orchestration are co-designed for the specific demands of autonomous systems.

The eighth-generation TPUs do not simply compute faster. They embody a thesis: that the transition from AI as a tool to AI as an autonomous agent will be won or lost at the infrastructure layer, and that the organisations controlling that layer will ultimately capture the economics of what comes next.