Amazon Doubles Down on Anthropic With $25B Investment

Amazon’s expanded Anthropic commitment, now totaling roughly $33 billion, reshapes the economics of frontier AI and signals a new era of long-horizon infrastructure competition.

Key Takeaways

- Amazon’s phased structure transfers $5 billion up front and ties as much as $20 billion more to commercial milestones, protecting against overpayment while aligning both parties around sustained growth.

- Anthropic’s pledge to direct more than $100 billion toward AWS over a decade validates Amazon’s custom silicon strategy and positions Trainium as a credible alternative to Nvidia for large-scale model workloads.

- The deal reflects a structural shift in AI competition: predictable, long-horizon compute access has become the primary constraint on frontier model development, and hyperscalers that can supply it gain durable strategic leverage.

A Commitment Built for Scale

When Amazon disclosed a further investment of up to $25 billion in Anthropic on April 20, it did so with the quiet confidence of an institution extending a position it already knows is working. Five billion dollars transferred immediately; as much as $20 billion more structured around commercial milestones. Against that, Anthropic committed to directing more than $100 billion toward Amazon Web Services technologies over the next decade, drawing on up to five gigawatts of new capacity across current and forthcoming generations of Amazon’s custom silicon.

The figures are large even by the extravagant standards of 2026 AI spending. Yet the transaction carries a different weight than the attention-seeking capital announcements that have periodically punctuated this cycle. This is not a first bet placed in hope. Amazon has been building toward this moment since September 2023, when it made its initial foray into Anthropic with up to $4 billion. A further $4 billion followed in November 2024. The latest commitment brings total exposure to roughly $33 billion and converts what began as opportunistic stake-building into explicit, long-dated strategic interdependence.

Revenue That Validates the Infrastructure

Anthropic’s run-rate revenue has surpassed $30 billion, more than triple its level at the close of 2025. That trajectory reflects enterprise adoption of Claude across coding, reasoning, and increasingly autonomous agentic workflows, and it has created a new problem: infrastructure supply that strains at peak hours. The compute pledge of more than $100 billion is therefore less a financial arrangement than a structural solution to the single greatest constraint on Anthropic’s ambitions.

Amazon’s own capital expenditure guidance, running at roughly $200 billion for 2026 and heavily weighted toward AWS and AI infrastructure, requires visible, long-dated demand to justify its scope. Anthropic provides exactly that. Few relationships in technology today exhibit this degree of bilateral alignment. One party needs compute at planetary scale; the other needs anchor customers with predictable, decade-long consumption. The terms here satisfy both requirements simultaneously.

Silicon Strategy, Confirmed

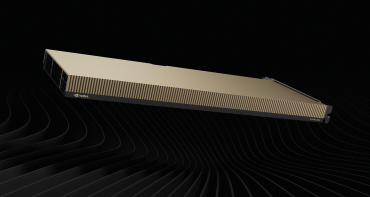

The new agreement deepens integration on three fronts. Infrastructure comes first. Anthropic will access Trainium2 already at scale, Trainium3 scaling later this year, Trainium4 in subsequent periods, and Graviton cores numbering in the tens of millions, with new inference capacity committed in Asia and Europe. Second, the full Claude platform is entering private beta within AWS, tying it more tightly to Bedrock Agents, Nova models, and the broader AWS AI stack. Third, the milestone-linked capital structure aligns incentives more precisely than a conventional equity check would allow.

The infrastructure dimension deserves particular attention. Anthropic’s decade-long commitment to run its largest models on Trainium is the most significant public validation yet of Amazon’s custom silicon thesis. For years, Nvidia’s dominance over GPU supply was treated as a near-permanent feature of the AI landscape. What has quietly shifted is the competitive axis: raw chip scarcity has given way to a more sophisticated contest over total cost of ownership, power efficiency, and supply-chain resilience. Amazon’s ability to bundle compute, storage, networking, and managed services at scale introduces structural advantages that chipmakers competing on hardware alone cannot easily replicate.

Project Rainier, now running on nearly half a million Trainium2 chips across Indiana facilities, and the more than 100,000 customers already accessing Claude through Bedrock without additional credentials or contracts, are not incidental details. They are proof points that the integration has moved well past the pilot stage.

The Economics of Lock-In, Done Right

Amazon’s equity stake in Anthropic, retained as a minority position, now sits alongside a commercial revenue stream pledged at more than $100 billion over a decade. That combination of equity upside and recurring cloud consumption is structurally rare even among hyperscalers, and it is not accidental. The phased payment mechanism protects against the risk of overvaluing growth that proves more capital-intensive than projected, while the commercial milestones ensure that further disbursements track real business performance rather than market sentiment.

Anthropic’s February 2026 Series G valued the company at $380 billion post-money. Reports of richer offers circulating at the time of the latest deal suggest demand for access to Anthropic’s equity and commercial partnership has not softened. That Amazon held to a structured, milestone-contingent format under those conditions reflects deliberate capital discipline, not a lack of conviction.

For Anthropic, the arrangement solves governance as much as it solves compute. The company preserves research independence while accessing infrastructure that no independent lab could assemble on its own timeline. Claude’s iterative release cadence, delivering consistent advances in reasoning, safety, and tool use, has built enterprise trust that rivals have occasionally struggled to match. Protecting that cadence from silicon shortages is a strategic priority, not merely an operational one.

The Infrastructure Wars Enter a New Phase

The broader implication of this transaction extends well beyond the two principals. The AI industry is entering a period defined by explicit, multi-year capacity commitments rather than spot procurement or rolling contract renewals. Amazon’s arrangement with Anthropic resembles, in structural terms, the anchor-tenant logic familiar from commercial real estate and utility infrastructure: a long-term off-take commitment that de-risks the capital investment required to build supply.

Amazon’s data-center expansion across the Pacific Northwest, Ohio, Indiana, and international markets reflects hundreds of billions in forward-looking infrastructure investment. Regulators on both sides of the Atlantic are already scrutinizing cloud concentration and AI safety. Commitments of this scale will draw further examination, even if they stop well short of vertical integration.

The risks are real. Successive Trainium generations must arrive on schedule and at cost. Permitting, power procurement, and supply-chain execution carry genuine uncertainty. Anthropic must sustain Claude’s enterprise edge in a competitive field where advances can shift demand rapidly.

What the deal ultimately represents is a disciplined, well-structured wager on a thesis that is becoming harder to dispute: that in an economy where intelligence scales with compute, whoever controls the infrastructure layer holds the decisive long-term advantage. Amazon has positioned itself to be that infrastructure layer for the most demanding AI workloads in the world. The capital is committed, with the first $5 billion already transferred; the architecture is in place; and the decade-long alignment with one of the industry’s most credible frontier labs is now formalized. Execution is what remains.