Cerebras Hits Nasdaq With the Largest U.S. IPO of 2026

The AI chipmaker’s $5.55 billion debut signals investor conviction in a wafer-scale architecture that challenges GPU orthodoxy, but execution risk and customer concentration remain live tests for public-market shareholders.

Key Takeaways

- Cerebras priced at $185 per share and opened at $350 on Nasdaq, raising $5.55 billion in the largest U.S. IPO of 2026 and pushing the fully diluted market capitalization toward $100 billion on debut day.

- Revenue reached $510 million in 2025, up 76% year over year, underpinned by a $24.6 billion contracted backlog anchored by a landmark multi-year agreement with OpenAI for up to 750 megawatts of compute capacity through 2028.

- The WSE-3’s wafer-scale architecture offers measurable inference speed advantages over GPU-based systems, positioning Cerebras as the most credible structural challenger to Nvidia’s dominance in AI acceleration hardware.

A Different Bet on Silicon

When Cerebras Systems priced its shares at $185 on May 13, 2026, it settled a question that had circled the AI hardware industry for the better part of a decade: whether a radically unconventional approach to chip design could survive long enough to reach public markets at scale. The answer arrived with force. Oversubscribed 20 times and priced after two successive upward revisions, the offering raised $5.55 billion from 30 million shares, making it the largest U.S. IPO of the year. Shares opened at $350 on the Nasdaq, pushing the fully diluted market capitalization toward $100 billion within hours of the first print. Underwriters hold options on an additional 4.5 million shares.
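The offering arithmetic is straightforward to check. The short Python sketch below uses only the figures quoted in this article. The assumption that the underwriters' option would be exercised in full at the $185 IPO price is ours, for illustration only, and is not a term confirmed here.

```python
# Figures quoted in the article.
IPO_PRICE = 185.0             # USD per share
SHARES_SOLD = 30_000_000      # base offering
OPEN_PRICE = 350.0            # first Nasdaq print, USD per share
OPTION_SHARES = 4_500_000     # underwriters' option


def gross_proceeds(price: float, shares: int) -> float:
    """Gross proceeds in USD, before fees and expenses."""
    return price * shares


base_proceeds = gross_proceeds(IPO_PRICE, SHARES_SOLD)
# Assumption (illustrative): the option is exercised in full at the IPO price.
option_proceeds = gross_proceeds(IPO_PRICE, OPTION_SHARES)
# Premium of the opening print over the IPO price, as a fraction.
opening_gain = OPEN_PRICE / IPO_PRICE - 1.0

print(f"Base proceeds:   ${base_proceeds / 1e9:.2f}B")
print(f"Option proceeds: ${option_proceeds / 1e6:.1f}M (if fully exercised)")
print(f"Opening premium: {opening_gain:.1%} over the IPO price")
```

On these inputs the base deal works out to $5.55 billion, and the opening print sits roughly 89% above the offer price.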

The debut was not simply a function of IPO market temperature, though conditions were favorable. It reflected something more specific: a growing recognition among institutional allocators that the GPU, for all its virtues, carries genuine architectural limitations as AI inference workloads grow larger and more demanding. Cerebras, founded in Sunnyvale in 2016 by Andrew Feldman and his co-founders, has spent nearly a decade building directly against those limitations. Its listing is the clearest signal yet that this architectural thesis has found serious believers, and that the capital markets are prepared to price that belief at a scale that would have seemed implausible even twelve months ago.

The Engineering Foundation

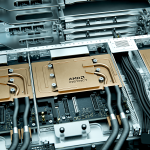

At the center of the Cerebras story is the Wafer-Scale Engine, now in its third generation. The WSE-3 is a single monolithic chip fabricated on TSMC’s 5nm process and measuring approximately 46,225 square millimeters, roughly 57 times the surface area of a leading GPU. It integrates around 4 trillion transistors, 900,000 AI-optimized cores, 44 gigabytes of on-chip SRAM, and 21 petabytes per second of memory bandwidth. The accompanying CS-3 system delivers 125 petaflops of peak AI performance per node.
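A few per-core ratios help put those headline specifications in proportion. The sketch below is a back-of-envelope calculation from the numbers quoted above. It assumes decimal units (1 GB = 10^9 bytes, 1 PB = 10^15 bytes) and an even split of memory and bandwidth across cores, which is a simplification rather than a published per-core specification.

```python
# Specifications quoted in the article for the WSE-3.
DIE_AREA_MM2 = 46_225           # square millimeters
AREA_RATIO_VS_GPU = 57          # "roughly 57 times" a leading GPU
CORES = 900_000
SRAM_BYTES = 44e9               # 44 GB, assuming decimal gigabytes
BANDWIDTH_BYTES_PER_S = 21e15   # 21 PB/s, assuming decimal petabytes

# Die area of the comparison GPU implied by the 57x ratio.
implied_gpu_area_mm2 = DIE_AREA_MM2 / AREA_RATIO_VS_GPU

# Assumption (illustrative): resources are divided evenly across all cores.
sram_per_core_kb = SRAM_BYTES / CORES / 1e3
bandwidth_per_core_gbs = BANDWIDTH_BYTES_PER_S / CORES / 1e9

print(f"Implied GPU die area:      {implied_gpu_area_mm2:.0f} mm^2")
print(f"SRAM per core:             {sram_per_core_kb:.1f} KB")
print(f"Memory bandwidth per core: {bandwidth_per_core_gbs:.1f} GB/s")
```

The implied comparison die of roughly 811 square millimeters is an inference from the quoted ratio, not a figure stated by the company.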

The practical implication of this design is significant. Conventional GPU clusters require constant, high-bandwidth communication between chips to move data through large model layers. The WSE-3 eliminates much of that overhead by keeping activations and weights closer to compute. The result, on specific open-source model benchmarks, is inference throughput up to 15 times faster than leading GPU-based configurations, at lower energy per unit of useful output. Validations from national laboratories reinforce the claim: molecular dynamics simulations ran 179 times faster on Cerebras hardware than on the Frontier supercomputer.

This is not a theoretical advantage. It has translated into commercial contracts at a scale few private hardware companies achieve before going public.

Revenue, Backlog, and the OpenAI Agreement

Cerebras reported revenue of $510 million in 2025, a 76% increase from $290.3 million in 2024 and a more than sixfold rise from $78.7 million in 2023. Hardware sales contributed $358.4 million; cloud and services brought in $151.6 million. Gross margins held near 39%. GAAP net income reached $237.8 million, though this figure was materially shaped by one-time gains, including those from a restructuring with UAE investor G42. The non-GAAP net loss stood at $75.7 million after stock-based compensation adjustments, a more instructive baseline for evaluating underlying profitability.
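The growth and mix figures above can be reproduced from the reported totals. This is a minimal sketch using only the revenue numbers quoted in this article, in millions of U.S. dollars; the variable and function names are ours.

```python
# Revenue figures quoted in the article, in millions of USD.
revenue_musd = {2023: 78.7, 2024: 290.3, 2025: 510.0}
segments_2025_musd = {"hardware": 358.4, "cloud_and_services": 151.6}


def yoy_growth(prior: float, current: float) -> float:
    """Year-over-year growth as a fraction (0.76 means 76%)."""
    return current / prior - 1.0


growth_2025 = yoy_growth(revenue_musd[2024], revenue_musd[2025])
multiple_vs_2023 = revenue_musd[2025] / revenue_musd[2023]

# Check that the two segments add up to the reported total.
segment_total = sum(segments_2025_musd.values())
segment_share = {
    name: value / segment_total for name, value in segments_2025_musd.items()
}

print(f"2025 growth vs 2024: {growth_2025:.1%}")
print(f"2025 revenue vs 2023: {multiple_vs_2023:.2f}x")
for name, share in segment_share.items():
    print(f"{name}: {share:.1%} of 2025 revenue")
```

Hardware accounts for about 70% of the 2025 total on these numbers, with cloud and services making up the remaining 30% or so.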

The forward picture is dominated by one figure: $24.6 billion in contracted backlog as of the S-1 filing. Anchoring that number is a multi-year agreement with OpenAI for up to 750 megawatts of compute capacity through 2028, with contractual options extending toward nearly 3 gigawatts by 2030. OpenAI provided a $1 billion advance and received warrants in exchange. A separate partnership with Amazon Web Services integrates the CS-3 into Bedrock for managed inference, adding a significant distribution channel.

These are not small arrangements signed to dress a prospectus. They represent multi-year infrastructure commitments from organizations that deploy compute at a scale few other entities can match. That OpenAI chose Cerebras for a portion of its inference infrastructure is the kind of institutional endorsement that matters to prospective investors evaluating whether an alternative architecture can hold its ground.

Concentration and the Path to Diversification

The risks in the Cerebras filing are stated clearly and should be read with the same attention given to its strengths. In 2025, two customers tied primarily to UAE-based entities, including G42 and MBZUAI, accounted for approximately 86% of total revenue. U.S.-billed revenue declined year over year. For a company entering public markets with a valuation above $56 billion at the IPO price, that concentration represents a structural vulnerability that management will need to reduce over the coming reporting periods.
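To put the concentration in dollar terms, the sketch below applies the approximate 86% share to the reported 2025 revenue. Because the share is quoted as approximate, the outputs are rough estimates rather than reported figures.

```python
# Inputs quoted in the article.
TOTAL_REVENUE_2025_MUSD = 510.0   # millions of USD
TOP_TWO_SHARE = 0.86              # "approximately 86%" of total revenue

# Estimated revenue from the two largest customers, and from everyone else.
top_two_revenue_musd = TOTAL_REVENUE_2025_MUSD * TOP_TWO_SHARE
other_revenue_musd = TOTAL_REVENUE_2025_MUSD - top_two_revenue_musd

print(f"Top two customers:   ~${top_two_revenue_musd:.1f}M")
print(f"All other customers: ~${other_revenue_musd:.1f}M")
```

On these inputs, all customers outside the top two contributed on the order of $71 million in 2025.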

The OpenAI and AWS relationships are the most visible moves in that direction. Their inclusion in the backlog signals an intentional shift toward U.S.-based hyperscale and frontier AI demand, categories that carry both greater revenue potential and more predictable institutional approval. Whether the pipeline behind those two anchor relationships is sufficiently deep to sustain the growth trajectory implied by current valuations is the central question investors will be testing across the next several quarters.

The Competitive and Market Context

Cerebras arrives on public markets during a period of sustained capital intensity across AI infrastructure. Broader markets have rewarded chipmakers and hardware enablers, though not uniformly. Nvidia’s software ecosystem, customer relationships, and installed base remain formidable advantages that no new entrant can replicate quickly. What Cerebras offers instead is architectural differentiation in a specific and growing segment: inference for very large models where memory bandwidth and interconnect latency constrain throughput.

As frontier AI systems migrate toward mixture-of-experts architectures and trillion-parameter deployments, the cost of moving data between chips becomes an increasingly meaningful variable. Cerebras has engineered directly against that cost. The question is not whether the advantage is real. The question is whether it is defensible across multiple product generations, against a competitor with vastly greater manufacturing leverage, software depth, and balance sheet.

A Live Test of an Architectural Thesis

Trading at roughly 45 to 67 times trailing revenue depending on the metrics applied, Cerebras commands a valuation that prices in considerable execution. Thin float dynamics amplified the first-day move, with shares opening at $350, close to double the $185 IPO price. That kind of debut creates its own pressure: the company must now perform publicly at a cadence and transparency level that private capital does not demand.

For policymakers and business leaders monitoring the AI supply chain, the listing is instructive beyond its financial details. It demonstrates that institutional capital is willing to underwrite real architectural risk when the engineering is credible and the customer roster is serious. Cerebras built something different, found customers willing to commit billions to it, and has now accessed public markets at a scale that funds the next phase of development.

The wager on one vast, coherent silicon expanse rather than interconnected grids of smaller chips is now, plainly, a public company story. The coming quarters will determine whether it becomes a durable one.