- AI Infrastructure

- Autonomous Vehicles

- CES 2026

- Physical AI

NVIDIA’s Shift to Physical AI Signals New Infrastructure Era

Jensen Huang’s CES unveiling signals pivot from cloud dominance to embodied intelligence across autonomous vehicles, robotics and manufacturing infrastructure.

Key Takeaways

- Rubin’s rack-scale architecture targets roughly order-of-magnitude lower token costs, trains massive models with 75 percent fewer GPUs than Blackwell and packs 30 percent more silicon into the same power envelope, directly addressing electricity as the binding constraint on AI infrastructure expansion.

- Open-source Alpamayo strategy locks automotive industry into NVIDIA compute as Mercedes-Benz CLA becomes first production deployment of reasoning autonomous driving, with JLR, Lucid and Uber following, replicating the CUDA playbook that established cloud dominance.

- Physical AI pivot targets trillion-dollar markets beyond data centers as NVIDIA extends the dominance behind its $51 billion-a-quarter data center business into autonomous vehicles, industrial robotics and manufacturing automation, where intelligence must perceive and act in real-world environments.

The Infrastructure Monopolist Moves to Ground

Jensen Huang’s annual pilgrimage to the Consumer Electronics Show has become something of a ritual in the technology calendar, but this year’s performance at the Fontainebleau Las Vegas carried unusual weight. The NVIDIA chief executive used the occasion to signal a fundamental reorientation: after building an empire on cloud-based artificial intelligence, the company is now staking its next decade on intelligence that operates in physical space. The announcement of the Rubin computing platform and the Alpamayo autonomous driving framework represents more than product iteration. It is a calculated pivot toward embodied AI, where the economic prize is measured not in data center contracts but in trillion-dollar markets spanning transportation, manufacturing, and robotics.

The timing reflects both maturity and ambition. NVIDIA’s most recent quarterly revenue of $57 billion, of which $51.2 billion (roughly 90 percent) came from data center operations alone, demonstrates extraordinary concentration in cloud infrastructure. Data center revenue grew 66 percent year over year, and the business has produced margins that few industrial enterprises ever achieve. The move into physical AI builds on this foundation by opening adjacencies where NVIDIA’s compute architecture can become core infrastructure for entirely new categories of economic activity.

Rubin’s Architectural Departure

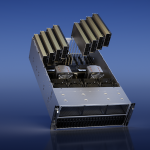

What distinguishes Rubin from prior NVIDIA platforms is the abandonment of the GPU as the primary unit of design. Instead, the company has engineered a six-component rack-scale system where the Rubin GPU, Vera CPU, NVLink 6 interconnect, ConnectX-9 network adapter, BlueField-4 data processing unit, and Spectrum-6 Ethernet switch function as a single integrated machine. The NVL72 configuration delivers 3.6 exaFLOPS of inference performance and 2.5 exaFLOPS for training workloads, but the more consequential metric is efficiency under sustained load rather than theoretical peak output.
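
As a rough cross-check, the rack-level figures can be divided across the 72 GPUs in an NVL72 rack. This is a minimal sketch that assumes the quoted exaFLOPS aggregate linearly across GPUs and that both figures use the same low-precision number format; the article does not state the precision, and the constant and function names are illustrative.

```python
# Rack-level figures quoted for the NVL72 configuration.
RACK_INFERENCE_EXAFLOPS = 3.6
RACK_TRAINING_EXAFLOPS = 2.5
GPUS_PER_RACK = 72
PETAFLOPS_PER_EXAFLOP = 1_000


def per_gpu_petaflops(rack_exaflops: float, gpus: int = GPUS_PER_RACK) -> float:
    """Split a rack-level figure evenly across its GPUs (assumes linear aggregation)."""
    return rack_exaflops * PETAFLOPS_PER_EXAFLOP / gpus


if __name__ == "__main__":
    print(f"inference: {per_gpu_petaflops(RACK_INFERENCE_EXAFLOPS):.1f} PFLOPS per GPU")
    print(f"training:  {per_gpu_petaflops(RACK_TRAINING_EXAFLOPS):.1f} PFLOPS per GPU")
```

Run as a script, this prints 50.0 PFLOPS per GPU for inference and 34.7 for training, which is what an even split of the rack totals implies.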

The economic implications are substantial. Training a 10-trillion-parameter mixture-of-experts model on 100 trillion tokens now requires one-quarter as many GPUs as the Blackwell generation, with token processing costs falling by roughly an order of magnitude. For hyperscale operators, where electricity has emerged as the defining constraint on expansion, these gains translate directly into competitive advantage. The new Inference Context Memory Storage architecture alone increases token throughput by up to five times and improves power efficiency by a similar margin for long-context applications.
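
The GPU-count and cost claims reduce to simple ratios. The sketch below takes the article's one-quarter GPU ratio and treats "roughly an order of magnitude" as exactly 10x; the Blackwell baseline cluster size and per-token price in the usage lines are hypothetical placeholders, not published figures.

```python
import math
from fractions import Fraction

# Ratio quoted for the 10T-parameter MoE model trained on 100T tokens.
RUBIN_GPU_FRACTION = Fraction(1, 4)  # Rubin needs one-quarter of the Blackwell GPU count
COST_REDUCTION_FACTOR = 10           # "roughly an order of magnitude", assumed to be 10x


def rubin_gpus_needed(blackwell_gpus: int) -> int:
    """GPUs needed on Rubin for the same job, rounded up to whole GPUs."""
    return math.ceil(blackwell_gpus * RUBIN_GPU_FRACTION)


def percent_fewer_gpus() -> float:
    """Reduction in GPU count implied by the one-quarter ratio."""
    return float((1 - RUBIN_GPU_FRACTION) * 100)


def rubin_cost_per_million_tokens(blackwell_cost: float) -> float:
    """Per-token cost on Rubin under the assumed 10x reduction."""
    return blackwell_cost / COST_REDUCTION_FACTOR


if __name__ == "__main__":
    baseline_gpus = 100_000  # hypothetical Blackwell cluster size
    baseline_cost = 2.00     # hypothetical dollars per million tokens on Blackwell
    print(rubin_gpus_needed(baseline_gpus), "GPUs,", percent_fewer_gpus(), "percent fewer")
    print(f"${rubin_cost_per_million_tokens(baseline_cost):.2f} per million tokens")
```

Using `Fraction` keeps the one-quarter ratio exact, so the "75 percent fewer GPUs" figure falls out without floating-point rounding.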

The technical achievement lies in systemic optimization rather than component-level heroics. Sixth-generation NVLink provides 3.6 terabytes per second of bidirectional bandwidth per GPU, turning the rack into what amounts to a single 72-GPU logical processor in which any GPU can reach any other at full NVLink bandwidth. The Vera CPU, built on 88 custom Olympus cores, functions less as a traditional processor than as a traffic orchestrator that keeps data flowing without stalling GPU execution. Direct liquid cooling with doubled flow rates and rack-level power smoothing allow operators to increase silicon density by 30 percent within the same power envelope.
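
The per-GPU NVLink figure also implies the total traffic the rack's switch fabric must carry, and, because the figure is bidirectional, half of it is available in each direction. A minimal sketch of that arithmetic; the 90 GB payload in the usage lines is a hypothetical example, and the model ignores protocol overhead and contention.

```python
NVLINK6_TBPS_PER_GPU = 3.6  # bidirectional bandwidth per GPU, as quoted (TB/s)
GPUS_PER_RACK = 72


def aggregate_nvlink_tbps(per_gpu_tbps: float = NVLINK6_TBPS_PER_GPU,
                          gpus: int = GPUS_PER_RACK) -> float:
    """Total bidirectional NVLink bandwidth summed across the rack."""
    return per_gpu_tbps * gpus


def one_way_transfer_ms(payload_gb: float,
                        per_gpu_tbps: float = NVLINK6_TBPS_PER_GPU) -> float:
    """Idealized time to send a payload out of one GPU, using half the bidirectional rate."""
    one_way_gb_per_s = per_gpu_tbps * 1_000 / 2
    return payload_gb / one_way_gb_per_s * 1_000


if __name__ == "__main__":
    print(f"rack aggregate: {aggregate_nvlink_tbps():.1f} TB/s")
    print(f"90 GB one way: {one_way_transfer_ms(90):.1f} ms")
```

The rack-wide sum comes to about 259 TB/s, and a single GPU can push 90 GB out in roughly 50 ms under these idealized assumptions.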

This matters because the data center business is undergoing a fundamental transition. The constraint is no longer chip availability but the ability to power and cool megawatt-scale installations. NVIDIA’s architecture addresses this through 800-volt DC distribution, advanced thermal management, and designs optimized for sustained utilization. In an industry where capital intensity is rising steeply, the company that can deliver the most compute per kilowatt-hour commands pricing power.
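
The case for 800-volt DC distribution rests on standard circuit physics: for a fixed power draw, current falls in proportion to voltage (I = P / V), and resistive loss in a conductor scales with the square of current (I²R). The sketch assumes a hypothetical 1 MW load and uses 54 V as an illustrative lower-voltage comparison point; neither number comes from the announcement.

```python
def current_amps(power_watts: float, volts: float) -> float:
    """DC current drawn for a given power at a given voltage: I = P / V."""
    return power_watts / volts


def relative_conduction_loss(v_low: float, v_high: float) -> float:
    """I^2 R loss at v_high as a fraction of the loss at v_low,
    for the same power and the same conductor resistance."""
    return (v_low / v_high) ** 2


if __name__ == "__main__":
    load_w = 1_000_000  # hypothetical 1 MW load
    for volts in (54, 800):  # 54 V is an illustrative comparison point
        print(f"{volts:>4} V: {current_amps(load_w, volts):,.0f} A")
    print(f"loss at 800 V vs 54 V: {relative_conduction_loss(54, 800):.2%}")
```

At 800 V the same load draws 1,250 A instead of roughly 18,500 A, and conduction loss in a given conductor falls to under half a percent of the 54 V case.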

Alpamayo and the Autonomous Vehicle Opportunity

The autonomous driving announcement operates on different economic logic. Alpamayo is not a proprietary system but an open portfolio consisting of vision-language-action models, simulation frameworks, and curated training data. The flagship Alpamayo R1 model, at 10 billion parameters, ingests video streams and generates driving trajectories while producing explicit reasoning traces that explain its decisions in real time. This represents a significant advancement over the black-box neural networks that have defined autonomous systems to date.
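
To make the pairing of a trajectory with a reasoning trace concrete, the sketch below defines a hypothetical record that a downstream planner or logging system might receive. `Waypoint`, `DrivingDecision`, and every field are illustrative assumptions; they are not Alpamayo's actual output schema or API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Waypoint:
    t_s: float  # seconds into the planned horizon
    x_m: float  # metres ahead of the vehicle
    y_m: float  # metres left (+) or right (-) of the vehicle


@dataclass
class DrivingDecision:
    """Hypothetical record pairing a planned trajectory with its reasoning trace."""
    trajectory: List[Waypoint]
    reasoning: List[str] = field(default_factory=list)

    def horizon_s(self) -> float:
        """Length of the planned horizon, taken from the last waypoint."""
        return self.trajectory[-1].t_s if self.trajectory else 0.0

    def explain(self) -> str:
        """Render the reasoning steps as a single human-readable chain."""
        return " -> ".join(self.reasoning)


if __name__ == "__main__":
    decision = DrivingDecision(
        trajectory=[Waypoint(0.5, 4.0, 0.0), Waypoint(1.0, 7.5, 0.0), Waypoint(1.5, 10.0, 0.2)],
        reasoning=["pedestrian near crosswalk", "reduce speed", "keep lane"],
    )
    print(f"{decision.horizon_s()} s horizon: {decision.explain()}")
```

Keeping the reasoning steps alongside the waypoints they justify is what makes a decision auditable after the fact, in contrast to a network that emits only the trajectory.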

The strategic calculation is transparent. By open-sourcing the reasoning layer, NVIDIA accelerates the entire autonomous vehicle ecosystem while ensuring that development activity remains anchored to its silicon and simulation tools. The pattern replicates the CUDA strategy that established NVIDIA’s dominance in scientific computing: provide the high-level abstractions, retain control of the accelerated computing substrate. The first production deployment, in the Mercedes-Benz CLA running NVIDIA DRIVE Hyperion with Thor processors, offers early validation of the technical approach. Additional partnerships with Jaguar Land Rover, Lucid, and Uber suggest broader industry adoption.

The business model centers on volume manufacturing of automotive compute modules rather than software licensing. Thor-based systems must deliver automotive-grade reliability while processing sensor streams in real time, and meeting both requirements at once creates natural differentiation. As vehicles become continuous learners that improve through fleet data, the computational demands compound. NVIDIA positions itself as the supplier of the training infrastructure in the cloud, the inference hardware in the vehicle, and the simulation environments that bridge the two.

The Embodied Intelligence Thesis

What unifies the Rubin and Alpamayo announcements is a conviction that artificial intelligence is entering a phase where value creation shifts from language understanding to physical intervention. Industrial robotics, warehouse automation, and scientific simulation represent markets where AI must perceive three-dimensional environments, reason about physical constraints, and execute actions with real-world consequences. These applications demand different architectural priorities: latency sensitivity, power efficiency, and the ability to process multimodal sensor data while maintaining deterministic behavior for safety-critical functions.

NVIDIA’s answer is a vertically integrated stack spanning silicon, system architecture, simulation tools, and foundation models. The Cosmos world models generate synthetic training data for autonomous systems. Isaac Sim and Isaac Lab allow humanoid robots to learn in simulation before physical deployment. An expanded partnership with Siemens will integrate Omniverse with industrial design workflows, turning manufacturing facilities into continuously optimizing systems. The breadth reflects years of systematic preparation for this market transition.

Platform Economics at Scale

The architecture creates natural network effects. As more developers build on Alpamayo and train in Isaac Sim, the value of NVIDIA’s simulation and compute infrastructure increases. Each production deployment generates data that improves foundation models, which in turn accelerates adoption. The open-source releases ensure that application-layer innovation remains broadly accessible while the underlying compute substrate remains proprietary. This balance has proven effective in cloud AI, where NVIDIA maintained dominant share even as model architectures proliferated.

For automotive partners, the proposition is compelling. The Mercedes-Benz CLA, which carries this technology, has earned a five-star Euro NCAP rating, and the system will roll out in the U.S. market during 2026. Other manufacturers gain access to reasoning capabilities that would require years of independent development, along with simulation tools that compress validation cycles. The trade is clear: automotive manufacturers focus on vehicle integration and brand experience while NVIDIA provides the intelligence substrate.

The industrial robotics opportunity follows similar logic. Humanoid robots from Figure, Tesla, and others require the same capabilities that autonomous vehicles demand: real-time perception, physical reasoning, and continuous learning. NVIDIA’s simulation environments allow these systems to accumulate thousands of hours of training before physical deployment, dramatically reducing development costs and time to market. The Isaac platform becomes infrastructure for an emerging industry.
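
The time compression that simulation offers is largely multiplicative: many environment instances run in parallel, each possibly faster than real time. The inputs in the usage lines are hypothetical, not Isaac Sim benchmarks, and this simple model ignores the sim-to-real gap that physical testing still has to close.

```python
def simulated_experience_hours(parallel_envs: int,
                               realtime_factor: float,
                               wall_clock_hours: float) -> float:
    """Experience accumulated = parallel environments x speed-up over real time x wall-clock hours."""
    return parallel_envs * realtime_factor * wall_clock_hours


if __name__ == "__main__":
    # Hypothetical run: 1,024 environments at real-time speed for one 8-hour shift.
    print(f"{simulated_experience_hours(1_024, 1.0, 8.0):,.0f} hours of experience")
```

Even at real-time speed, a single working shift of this hypothetical run yields 8,192 hours of robot experience, which is how "thousands of hours" can accumulate before a physical prototype moves.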

Capital Intensity as Competitive Moat

What the Las Vegas presentation made explicit is that NVIDIA’s competitive position rests increasingly on its ability to integrate complex systems at scale. The annual architecture cadence, with Rubin Ultra already anticipated for late 2027, requires orchestrating semiconductor fabrication, advanced packaging, thermal engineering, and software optimization across a supply chain that spans continents. Few organizations possess the capital, expertise, and customer relationships to execute at this level.

The move to rack-scale design raises barriers further. Customers no longer purchase components but complete systems that arrive tested and ready for deployment. This shifts the value proposition from silicon performance to total cost of ownership, where NVIDIA’s advantages in power efficiency and sustained utilization become decisive. Hyperscalers can optimize at the data center level, but NVIDIA optimizes at the rack level first, then provides tools for facility-scale orchestration.

The electricity constraint, rather than limiting growth, may actually strengthen NVIDIA’s position. As power becomes scarce relative to demand, operators prioritize efficiency over peak performance. Rubin’s ability to pack 30 percent more silicon into the same power envelope translates directly into capacity expansion without new electrical infrastructure. In markets where utility capacity takes years to provision, this advantage compounds.
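
At a fixed grid allocation, the density gain converts directly into deployable capacity. A minimal sketch, assuming compute scales linearly with installed silicon; the 100 MW site and the capacity-per-megawatt figure are hypothetical placeholders.

```python
DENSITY_GAIN = 0.30  # "30 percent more silicon" within the same power envelope


def capacity_units(site_power_mw: float, units_per_mw: float,
                   gain: float = DENSITY_GAIN) -> float:
    """Deployable capacity at a fixed grid allocation, with the density gain applied."""
    return site_power_mw * units_per_mw * (1 + gain)


def extra_power_without_gain_mw(site_power_mw: float,
                                gain: float = DENSITY_GAIN) -> float:
    """Additional grid power needed to reach the same capacity without the gain."""
    return site_power_mw * gain


if __name__ == "__main__":
    site_mw = 100       # hypothetical site allocation
    units_per_mw = 10   # hypothetical baseline capacity units per MW
    print(f"capacity: {capacity_units(site_mw, units_per_mw):.0f} units "
          f"vs {site_mw * units_per_mw} without the gain")
    print(f"equivalent extra grid power: {extra_power_without_gain_mw(site_mw):.0f} MW")
```

Framed this way, a 30 percent density gain on a 100 MW site is worth about 30 MW of utility capacity the operator does not have to wait for.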

The Scaling Thesis Extended

Huang’s presentation advanced a thesis about the next phase of economic organization: that compute is becoming the primary input for value creation across an expanding range of activities. Cloud AI established this pattern for digital services. Physical AI extends it to manufacturing, transportation, and automation. NVIDIA positions itself as the essential supplier for that transition.

The financial performance supports the narrative. Guidance of $65 billion for the current quarter reflects demand that continues to exceed supply across most product categories. Data center growth of 66 percent year over year comes on a base that already dwarfs most technology companies’ total revenue. The automotive and industrial opportunities represent adjacencies that could, over time, reach similar scale.

What emerged from the Fontainebleau was a company that has successfully navigated the transition from niche accelerator provider to primary infrastructure supplier for the AI era. The Rubin and Alpamayo platforms extend that position into physical domains where the addressable market could be an order of magnitude larger. The execution requirements are formidable, but NVIDIA has demonstrated the organizational capability to deliver on annual architecture cycles while maintaining technological leadership.

Infrastructure as Expansion

The strategic coherence is notable. Open-source the application layer to accelerate ecosystem development. Retain ownership of the compute substrate where differentiation is sustainable. Provide simulation tools that lock in the development workflow. Integrate vertically to capture value at the system level rather than the component level. This approach transformed NVIDIA from a GPU company into a platform company in cloud AI. The same methodology now applies to autonomous systems and industrial automation.

The implications extend beyond NVIDIA’s immediate business. If embodied intelligence follows the trajectory that Huang outlined, the infrastructure requirements will be measured in exaFLOPS deployed not in dozens of data centers but in millions of vehicles, robots, and factories. The company that supplies that infrastructure occupies a position analogous to what Intel held in the PC era or what TSMC holds in smartphone chip manufacturing: essential, difficult to displace, and economically significant.

Whether the vision materializes in full remains to be determined by technological progress and market adoption rates that no single company controls. What is now clear is the direction and the commitment. NVIDIA has placed a substantial portion of its organizational capacity behind the thesis that intelligence, increasingly, means the ability to perceive and act in physical space. The Las Vegas presentation was less a product launch than a declaration of strategic intent, delivered with the confidence of a company that has correctly anticipated major platform transitions before.