Jensen Huang Signals the Next Phase of AI at Nvidia GTC 2026

At GTC 2026, Jensen Huang reframes the AI conversation: the training boom is giving way to an age of agentic systems, physical intelligence, and industrial-scale deployment.

Key Takeaways

- Nvidia’s Vera Rubin platform and purpose-built Vera CPU signal a fundamental shift from discrete GPU sales toward vertically integrated AI factory systems optimized for every phase of the intelligence lifecycle, from pre-training through autonomous inference.

- With $1 trillion in projected GPU-related demand visible through 2027, Nvidia’s growth thesis has migrated from training-scale infrastructure to inference-scale and physical AI, a transition that implies sustained multi-year capital expenditure cycles across hyperscalers, sovereign clouds, and industrial enterprises.

- The company’s open-sourced agentic frameworks, robotics ecosystem partnerships, and space-optimized compute platforms reveal a deliberate strategy to open up and standardize the AI operating environment around Nvidia compute, positioning the company as the foundational layer beneath an economy increasingly run by autonomous systems.

The Recalibration in San Jose

Three weeks before Jensen Huang took the stage at the SAP Center in San Jose, Nvidia filed the kind of financial results that ordinarily mark a ceiling rather than a starting point. Quarterly revenue of $68.1 billion, up 73 percent year-over-year. Full-year revenue of $215.9 billion, a 65 percent increase. The data-center segment alone, at $62.3 billion for the quarter, now rivals the entire annual revenue of companies considered titans in their own industries.

And yet Huang’s keynote at GTC 2026, delivered to more than 30,000 attendees from 190 countries, was not a victory lap. It was something more strategically interesting: a deliberate repositioning of the company’s narrative at the precise moment when raw scale might otherwise become the story’s entire arc. The training gold rush, Huang signaled, has served its purpose. What follows is harder to build, more deeply embedded in the operations of the global economy, and considerably more durable as a business.

“I believe computing demand has increased by 1 million times over the last few years,” he told the audience. The $1 trillion GPU-related demand figure he now projects through 2027, double the $500 billion estimate he offered the year prior, was presented not as a revenue forecast but as a visibility metric: a reading of orders already in motion. For investors accustomed to parsing guidance, the distinction matters. Huang was describing committed infrastructure, not aspirational market share.

What the Vera Rubin Platform Actually Represents

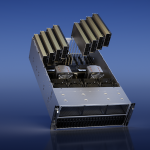

The hardware centerpiece of GTC 2026, the Vera Rubin platform, is best understood not as a product launch but as a statement of architectural intent. Its seven new chips and the rack systems built on them, including Vera Rubin NVL72 GPU systems, Vera CPU racks, Groq 3 LPX inference accelerators, and BlueField-4 STX storage, form a configurable stack designed to cover every phase of the AI lifecycle: pre-training, post-training, test-time scaling, and agentic inference.

The vocabulary here is precise and deliberate. Previous generations of Nvidia announcements spoke primarily to training workloads, where the competitive logic was straightforward: more GPU clusters meant faster model development. The Vera Rubin architecture speaks instead to the operational layer, to the systems that will run inference at industrial scale, route decisions through autonomous agents, and process the sensory data of physical machines in real time. This is a fundamentally different engineering problem, and the platform is built accordingly.

The Vera CPU is the clearest expression of this shift. Described by Nvidia as the world’s first processor purpose-built for agentic AI and reinforcement learning, it targets a CPU-side bottleneck that has grown more acute as models move from training environments into deployment. Early customer data cited at the announcement indicated twice the efficiency of conventional CPUs and 50 percent faster performance for data processing, training, and large-scale inference. Alibaba, ByteDance, Meta, Oracle Cloud Infrastructure, CoreWeave, and several emerging cloud providers have already committed to deployments. The processor is not positioned as a general-purpose replacement for existing silicon but as a specialized orchestration layer: it handles the memory-bound, coordination-heavy tasks that agentic systems generate in volume, freeing GPUs for the most compute-intensive operations.

Taken together with the Vera Rubin DSX reference architecture and the Omniverse DSX digital-twin blueprint released at the conference, the platform constitutes something closer to a turnkey factory specification than a component catalogue. Nvidia is no longer selling accelerators into a customer’s existing stack. It is offering the stack itself.

Physical AI as Industrial Doctrine

If the hardware announcements addressed the infrastructure question, the conference’s second major theme carried the more ambitious claim: that intelligence is becoming embodied, and that the economic consequences will be felt well beyond the technology sector.

Nvidia’s Open Physical AI Data Factory Blueprint, an open reference architecture that automates the data pipeline for robotics, vision agents, and autonomous vehicles, combines curation, synthetic data augmentation, evaluation, and orchestration in a single deployable framework. Cloud partners Microsoft Azure and Nebius have already integrated it into their offerings, and availability on GitHub is scheduled for April. Rev Lebaredian, Nvidia’s vice president of Omniverse and simulation technologies, framed the underlying logic with precision: “Physical AI is the next frontier of the AI revolution, where success depends on the ability to generate massive amounts of data. In this new era, compute is data.”

The robotics partnerships announced at GTC give that claim industrial substance. ABB, FANUC, KUKA, and YASKAWA on the manufacturing side. Figure, Agility, and AGIBOT among humanoid developers. CMR Surgical and Medtronic in surgical systems. The breadth is not coincidental. Huang’s statement that “every industrial company will become a robotics company” sounds categorical until one examines the deployment evidence: Skild AI operating on Foxconn’s Blackwell production lines in high-precision assembly; Disney’s Olaf robot scheduled for a public debut at Disneyland Paris; and updated Isaac simulation frameworks and GR00T N foundation models extending autonomous systems into environments that were, until recently, considered too unstructured for reliable machine operation.

What unites these announcements is a coherent theory about where value accumulates in the physical AI era. Data generation and simulation are not supplementary to the intelligence pipeline; they are the pipeline. Companies that control the synthetic data infrastructure will exercise significant leverage over the quality and safety of physical AI systems across every vertical. Nvidia, by open-sourcing the blueprint while retaining the underlying compute dependency, is playing a long and structurally advantageous hand.

The Agentic Layer and the Software Transition

Alongside the hardware and robotics announcements, Nvidia introduced the Agent Toolkit, built around the open-source OpenShell runtime developed in partnership with LangChain. The toolkit equips enterprises to build what Nvidia describes as self-evolving, safety-first agents. The AI-Q Blueprint for agentic search, also open-sourced, topped independent accuracy benchmarks while cutting query costs in half through hybrid orchestration of frontier and open models.

These are not productivity tools in the conventional sense. They represent Nvidia’s entry into the layer of the stack that sits above silicon and below application software, the orchestration and reasoning infrastructure that will increasingly determine how enterprises consume compute. The strategic parallel to the platform’s hardware positioning is direct: just as Vera Rubin offers an integrated factory specification rather than discrete components, the agentic software layer offers an integrated operational environment rather than isolated models.

The extension of this logic into space deserves note. Nvidia’s space-optimized platforms (Vera Rubin modules, IGX Thor, and Jetson Orin variants) are engineered for orbital AI compute and autonomous satellite operations, with early adopters including Aetherflux, Axiom Space, Kepler Communications, and Planet Labs. They indicate that the company’s definition of addressable infrastructure now spans terrestrial, edge, and orbital environments. The T-Mobile and Nokia AI-RAN integration, which runs physical AI applications on RTX PRO 6000 Blackwell Server Edition GPUs at the wireless edge and counts the City of San Jose among its initial municipal evaluators, reinforces the same point from a different direction.

The Architecture of a Decade

GTC 2026 will likely be read, in hindsight, as the conference at which Nvidia articulated the full scope of its ambition with unusual clarity. The company that supplied accelerators for the initial wave of large-model training has assembled a coherent architecture spanning foundation hardware, simulation and data infrastructure, agentic software frameworks, and embedded robotics, intended to function as the operating environment for an economy increasingly organized around autonomous systems.

For senior investors, the signal is that the company’s growth thesis has a second and more operationally complex chapter, one characterized less by the explosive unit economics of training buildouts and more by the durable revenue structures of infrastructure standardization. For policymakers, the implication is that AI infrastructure has moved from strategic option to competitive prerequisite across industrial, sovereign, and defense contexts. For business leaders, the practical question is no longer whether to engage with agentic and physical AI systems, but on whose terms and at what pace.

Nvidia’s answer to that question, laid out across seven hours of announcements in San Jose, is that the terms are increasingly its own. The factory blueprints have been drawn. Construction has already begun.