NVIDIA and Marvell Forge a $2 Billion AI Infrastructure Alliance

As inference demand surges and AI clusters grow, NVIDIA and Marvell are building the connective architecture that next-generation AI factories will run on.

Key Takeaways

- NVIDIA’s $2 billion investment in Marvell anchors a partnership built around NVLink Fusion, enabling custom accelerators to operate within NVIDIA’s dominant AI software and hardware ecosystem.

- Collaboration on silicon photonics and optical interconnects addresses one of the most consequential engineering constraints facing hyperscale AI clusters: the physical and power limits of electrical cabling at massive scale.

- The deal reflects a broader industry inflection, where hyperscalers seek specialised compute without ecosystem fragmentation, and NVIDIA positions itself as the connective tissue of heterogeneous AI infrastructure.

The Architecture of Ambition

There is a moment in any technology cycle when the dominant platform stops competing on raw performance and begins competing on architecture. NVIDIA reached that moment quietly, and the partnership announced with Marvell Technology on March 31 makes the strategy explicit. The two companies have agreed to deepen their collaboration around NVIDIA’s NVLink Fusion platform, with NVIDIA committing $2 billion as a direct investment in Marvell. The scope extends well beyond a component agreement: it encompasses custom silicon integration, silicon photonics, advanced optical interconnects, and the transformation of telecommunications infrastructure into AI-native networks. Taken together, it is one of the more consequential industrial alignments of the current AI buildout.

The financial backdrop sharpens the picture. NVIDIA reported fiscal 2026 revenues of $215.9 billion, up 65 percent year-on-year, almost entirely driven by data-centre demand. Marvell, a company often underestimated outside specialist circles, posted record revenues of $8.195 billion in the same period, growing 42 percent, with its data-centre segment reaching roughly $6.1 billion, or approximately 75 percent of total sales. These are not the numbers of a peripheral supplier. Marvell has become a central node in the infrastructure that powers the AI era, and NVIDIA’s investment is an acknowledgment of precisely that.
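The figures above can be cross-checked with a few lines of arithmetic. The sketch below, in Python, derives the data-centre share of Marvell's revenue and the prior-year revenue implied by each stated growth rate. The function names are illustrative, and the prior-year values are back-calculated from the rounded percentages quoted here, so they are estimates rather than reported figures.

```python
def share(part: float, total: float) -> float:
    """Return part as a fraction of total."""
    return part / total


def implied_prior(current: float, growth_pct: float) -> float:
    """Back out the prior-period value from a current value and a growth rate in percent."""
    return current / (1 + growth_pct / 100)


# Figures as stated in the text, in billions of US dollars.
nvidia_revenue = 215.9
marvell_revenue = 8.195
marvell_data_centre = 6.1  # described as "roughly" $6.1 billion

dc_share = share(marvell_data_centre, marvell_revenue)
print(f"Marvell data-centre share: {dc_share:.1%}")
print(f"Implied prior-year NVIDIA revenue: ${implied_prior(nvidia_revenue, 65):.1f}B")
print(f"Implied prior-year Marvell revenue: ${implied_prior(marvell_revenue, 42):.2f}B")
```

With the rounded $6.1 billion input, the share comes out at about 74 percent, consistent with the approximately 75 percent quoted above.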

What NVLink Fusion Actually Does

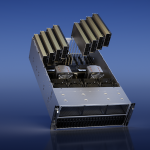

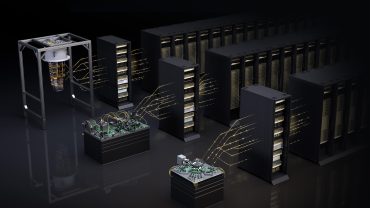

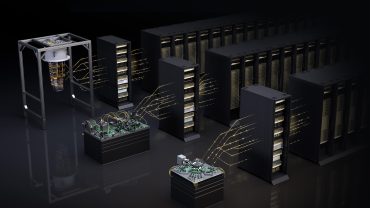

To understand what is at stake, it is worth being precise about the technology. NVLink Fusion, introduced at COMPUTEX in May 2025, is a rack-scale interconnect architecture designed to allow custom accelerators and processors, built by third parties, to connect with NVIDIA’s GPU compute, networking, and software layers without requiring a full architectural divorce from the NVIDIA ecosystem. In practical terms, it gives hyperscalers and ASIC designers a path to specialisation without fragmentation.

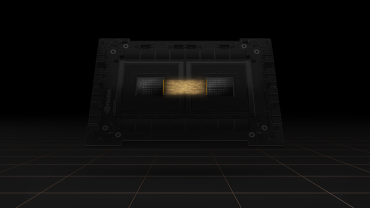

The significance of this is structural. Cloud operators have spent hundreds of billions of dollars building out data-centre capacity, and the economics of that investment are now being scrutinised with increasing rigour. Pure reliance on merchant GPUs carries real constraints: power consumption per workload, total cost of ownership at scale, and the efficiency gaps that emerge when general-purpose hardware handles highly specific inference tasks. Custom silicon, typically cited as offering performance-per-watt improvements of 30 to 50 percent on targeted tasks, addresses those gaps directly. The problem, historically, has been that custom chips require custom software stacks, custom interconnects, and custom supply chains. Every layer of customisation is a layer of risk.
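To make the performance-per-watt figure concrete, the sketch below converts an efficiency gain into the energy saved on a fixed amount of work. It assumes the cited 30 to 50 percent improvement applies uniformly to the workload and that the same work is done in both cases. The function name is illustrative, and the result is a simplification that ignores cooling, networking, and utilisation effects.

```python
def energy_saving(perf_per_watt_gain: float) -> float:
    """Fraction of energy saved on a fixed workload for a given perf-per-watt gain.

    A gain of 0.30 means 1.30x the work per joule, so the same work
    needs 1 / 1.30 of the original energy.
    """
    return 1 - 1 / (1 + perf_per_watt_gain)


for gain in (0.30, 0.50):
    print(f"{gain:.0%} better perf/W -> {energy_saving(gain):.1%} less energy per task")
```

Under these assumptions, a 30 to 50 percent gain translates to roughly 23 to 33 percent less energy for the same work, so the headline improvement and the saving on the power bill should not be read as the same number.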

NVLink Fusion dissolves a significant portion of that risk. By maintaining a coherent, high-bandwidth, low-latency fabric across heterogeneous nodes, it keeps custom XPUs inside the NVIDIA software orbit. CUDA remains the lingua franca. The orchestration layer stays intact. Operators gain the efficiency of specialised silicon without abandoning the programmability and ecosystem depth that NVIDIA has spent two decades building. Marvell, which brings established expertise in high-speed SerDes, optical digital signal processors, and custom ASICs, is now a formal and privileged participant in that architecture.

The Photonics Dimension

If NVLink Fusion is the strategic framework, the silicon photonics collaboration is the engineering wager that may prove most consequential over time. As AI clusters scale toward hundreds of thousands of accelerators, the interconnect problem becomes acute. Electrical signalling encounters hard physical limits: distance, heat, and power density all degrade performance in ways that rack-scale and row-scale designs cannot easily absorb. Optical solutions promise to extend reach, reduce energy consumption, and support the density requirements of next-generation AI factories in ways that copper simply cannot match.

Marvell holds a strong position in optical components, including coherent optics and photonic engines, and the agreement positions the two companies to develop optical interconnect solutions tailored specifically for AI workloads. This is not an incremental improvement on existing infrastructure. It is a foundational capability for building the clusters that will run trillion-parameter models and agentic inference workloads at commercial scale. The engineering challenges are genuine: manufacturing photonic components at acceptable yields and hyperscale volumes, while meeting stringent power and latency budgets, requires sustained investment and close integration between chip design and systems architecture. The partnership provides both.

AI-RAN and the Broader Scope

The third pillar of the collaboration, AI-RAN, extends the logic into telecommunications. NVIDIA’s Aerial platform already provides GPU-accelerated signal processing for 5G networks, and the joint work with Marvell targets the transformation of radio access networks into AI-native infrastructure capable of supporting both 5G deployments and the eventual transition to 6G. This is a longer-duration opportunity than the immediate data-centre buildout, but it reflects a consistent strategic instinct: wherever compute-intensive workloads are moving, NVIDIA intends to be the infrastructure layer.

For Marvell, which has deep roots in networking and connectivity silicon, the AI-RAN dimension is a natural extension of its existing business rather than a speculative pivot. The combination of Marvell’s high-performance analogue and connectivity expertise with NVIDIA’s accelerated computing platform creates a credible offering for telecom operators looking to modernise network infrastructure without a wholesale architectural reinvention.

The Competitive Context

The deal arrives against a backdrop of intensifying competition in AI silicon. Amazon, Google, Microsoft, and Meta have all invested heavily in proprietary accelerators, each pursuing workload-specific efficiency gains that reduce dependence on third-party hardware. Google’s TPUs, Amazon’s Trainium and Inferentia, Microsoft’s Maia, and Meta’s MTIA represent serious engineering commitments, not temporary experiments. The hyperscalers are genuinely building alternatives.

Yet the NVIDIA-Marvell partnership reflects a more nuanced reading of that competitive dynamic. Cloud operators do not necessarily want to exit the NVIDIA ecosystem entirely. The software investment required to migrate workloads away from CUDA-based infrastructure is substantial, and the performance density of NVIDIA’s latest GPU architectures remains a benchmark that custom silicon has not universally matched across all workload types. What operators want is optionality: the ability to deploy specialised compute where it is most efficient while retaining access to general-purpose GPU capacity and the deep software tooling that NVIDIA has built around it.

NVLink Fusion is NVIDIA’s answer to that demand. By opening the platform to semi-custom integration, the company acknowledges that the era of pure GPU monoculture in data centres is evolving, while positioning itself as the architecture around which heterogeneous compute will organise. It is a posture that historical parallels support. Ethernet standardised disaggregated data-centre networking without eliminating the value of optimised components above it. x86 accommodated specialised accelerators without ceding control of the general-purpose computing layer. The architectures that won those transitions were the ones that provided a stable, programmable foundation for innovation rather than resisting it.

What the Market Understood

Marvell Technology, Inc. (NASDAQ: MRVL) shares rose approximately 11 to 12 percent in early trading following the announcement, a reaction that reflects more than short-term enthusiasm for an equity infusion. Investors understood the structural implication: Marvell is no longer simply a connectivity and storage silicon supplier orbiting the AI data-centre economy. It is now formally integrated into the architectural layer that NVIDIA is building for the next generation of AI infrastructure, with a financial stake from the most consequential company in the sector to match.

NVIDIA’s own shares (NASDAQ: NVDA) moved modestly, which is itself informative. For a company generating $215.9 billion in annual revenue, a $2 billion investment amounts to less than 1 percent of sales and registers as a targeted commitment rather than a material financial event. The signal it sends is strategic rather than economic: this is the connectivity and optics partner with which NVIDIA has chosen to build the AI factory of the next decade.

The execution risks are real and should not be minimised. Joint silicon and software integration at this complexity requires sustained engineering coordination. Photonic component yields at volume remain a challenging manufacturing problem. Software portability across heterogeneous nodes demands continuous investment in tooling and abstraction layers. But the direction is clear, the mutual incentives are well-aligned, and the financial commitment is serious.

What NVIDIA and Marvell have announced is not simply a partnership. It is a statement about what the infrastructure layer of artificial intelligence will look like as it matures: heterogeneous in compute, optical in connectivity, and architecturally coherent through the software and interconnect layers that NVIDIA controls. That is a significant bet, and on March 31, the market decided it was a sensible one.