Meta Weighs Google TPUs as AI Infrastructure Pressures Intensify

Meta explores adopting Google TPUs to reduce GPU dependency as soaring AI compute needs reshape hardware economics and intensify competition with Nvidia.

Key Takeaways

- Meta’s $70-72 billion 2025 capex, up roughly 79% to 84% from 2024, exposes its reliance on Nvidia. Talks with Google over TPUs aim to ease supply constraints and vendor lock-in, and could redirect billions of dollars in procurement by 2027.

- TPUs reportedly use 30-40% less power per operation, which matters for Meta’s inference workloads on platforms like Instagram. Moving from CUDA to TPUs would take years of engineering, however, so a hybrid model that balances operational needs against supplier independence is the likely outcome.

- The negotiations point to a fragmenting AI hardware market, which could erode Nvidia’s estimated 80-95% share and encourage price competition. The shift also fits CHIPS Act goals and the national strategies highlighted in OECD analyses, and advances hyperscalers’ push for technology sovereignty in a trillion-dollar market.

The Hardware Dependency Problem

Meta Platforms has committed between $70 billion and $72 billion in capital expenditures for 2025, an increase of roughly 79% to 84% over the prior year’s $39.2 billion total. The overwhelming majority flows into servers, data centers, and network infrastructure designed to power artificial intelligence workloads. Yet beneath these extraordinary numbers lies a strategic vulnerability that has grown increasingly acute: the company’s profound reliance on Nvidia’s graphics processing units to run its AI operations.
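The growth figure can be checked directly from the guidance range and the prior-year total. The short Python sketch below uses only the dollar amounts cited above; the variable and function names are illustrative.

```python
# Year-over-year growth implied by the 2025 capex guidance range.
# Figures are the ones cited in the article, in billions of US dollars.
PRIOR_YEAR_CAPEX = 39.2
GUIDANCE_LOW, GUIDANCE_HIGH = 70.0, 72.0


def growth_pct(current: float, prior: float) -> float:
    """Percentage increase from prior to current."""
    return (current / prior - 1.0) * 100.0


low = growth_pct(GUIDANCE_LOW, PRIOR_YEAR_CAPEX)
high = growth_pct(GUIDANCE_HIGH, PRIOR_YEAR_CAPEX)
print(f"Implied growth: {low:.1f}% to {high:.1f}%")
# prints "Implied growth: 78.6% to 83.7%"
```

The low end of the guidance rounds to about 79%, while the high end implies an increase closer to 84%.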

Discussions now underway between Meta and Alphabet’s Google to integrate tensor processing units into Meta’s data centers represent far more than a routine vendor evaluation. These negotiations, first reported on November 25, 2025, involve potential commitments worth billions of dollars and deployment timelines extending to 2027. While neither company has formally acknowledged the talks, the implications proved substantial enough to move markets immediately. Nvidia’s shares declined 2.5% on the news, while Alphabet’s rose by a similar margin.

The development marks a potential inflection point in the AI hardware market, where a handful of hyperscale technology companies are spending hundreds of billions of dollars on computational infrastructure, and where Nvidia has maintained an estimated 80% to 95% market share in specialized AI accelerators. For Meta, the stakes extend beyond cost optimization. The company’s entire product evolution now depends on reliable access to massive amounts of processing power, from training its Llama language models to running real-time inference on billions of daily social media interactions.

Third-quarter results showed capital expenditures of $19.4 billion for the period alone, while advertising revenue reached $50.8 billion, up 26% year-over-year. This financial performance provides the cash flow to sustain an aggressive investment trajectory, yet the capacity to spend does not eliminate the strategic risk of depending on a single supplier for mission-critical infrastructure.

Scale and Strategic Exposure

The computational demands of modern machine learning have reached extraordinary levels. Training the Llama 3 series, released in April 2024, required clusters of approximately 16,000 Nvidia H100 GPUs. More recent iterations have pushed those requirements even higher. Meanwhile, the company has embedded AI features across its product portfolio, from content recommendations in Instagram Reels to multimodal capabilities in Ray-Ban smart glasses launched on September 30, 2025. These deployments demand not just training infrastructure but enormous inference capacity to serve billions of users in real time.

This operational reality has created profound dependencies. CEO Mark Zuckerberg acknowledged chip shortages directly in early 2024 earnings calls, noting bottlenecks that constrain AI scaling ambitions. Nvidia’s dominance stems from both technological leadership and ecosystem lock-in. The company’s CUDA software framework has become the de facto standard for AI development, creating substantial switching costs for customers whose entire technical infrastructure has been optimized around Nvidia’s architecture.

The financial implications are significant. Nvidia reported data center revenue of $26.3 billion for the second quarter of its fiscal 2025, much of it driven by hyperscalers like Meta. Supply constraints in the market have extended lead times for advanced AI chips to six months or more, driving up costs and limiting deployment flexibility. This scarcity has elevated expenses precisely when computational requirements continue their exponential growth, prompting Meta to pursue alternatives with increasing urgency.

Internal Development Initiatives

Meta has pursued multiple paths to reduce this dependency. The company’s most significant internal effort is the Meta Training and Inference Accelerator, a custom silicon project that debuted in April 2024. This proprietary chip, designed specifically for efficiency in ranking and recommendation tasks, promises advantages in power consumption compared to general-purpose GPUs. By March 2025, the company had deployed a second-generation version, according to regulatory filings that reference ongoing investments in custom hardware to reduce reliance on third-party providers.

In September 2025, Meta acquired Rivos, a RISC-V chip startup, to bolster its custom AI hardware capabilities. The transaction, part of the company’s broader $72 billion capital expenditure envelope, signals commitment to controlling more of its hardware stack. These efforts follow a broader industry pattern. Amazon Web Services developed its own Trainium chips for machine learning. Microsoft has invested in custom silicon partnerships through its Maia initiative. Google itself pioneered this approach with TPUs, which it began deploying in its own data centers in 2015.

Yet internal chip development requires years of iteration and carries substantial execution risk. Custom silicon must compete not just on raw performance but on the entire software ecosystem that surrounds it. Nvidia’s advantage lies not only in chip design but in a decade of software tools, libraries, and developer expertise built around CUDA. Replicating that ecosystem represents a formidable challenge, one that even companies with Meta’s resources struggle to overcome quickly. This reality necessitates hybrid strategies that incorporate proven alternatives alongside internal development efforts.

The TPU Alternative

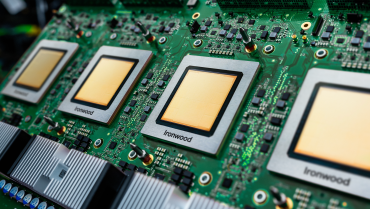

Google’s tensor processing units offer a different proposition. As application-specific integrated circuits designed expressly for the matrix operations central to neural networks, TPUs have powered Google’s own AI infrastructure for nearly a decade. The company offered them through its cloud platform starting in 2018, but recent moves suggest an expansion into direct sales for on-premises deployment in customer data centers.

The reported negotiations involve Meta potentially deploying TPUs worth billions of dollars starting in 2027, alongside shorter-term arrangements to rent TPU capacity through Google Cloud. Sources familiar with the discussions indicate the deal could represent one of the largest AI hardware transactions in the industry’s history, with volumes that would rival Google’s existing manufacturing arrangements with Broadcom.

For Meta, the appeal extends beyond diversification. TPUs reportedly consume 30% to 40% less power per operation than comparable GPUs, a critical consideration when energy costs represent 20% to 30% of data center operating expenses. In inference workloads, where trained models process incoming requests at scale, efficiency gains translate directly to reduced costs. Because Meta’s product portfolio generates billions of inference requests daily, particularly for the content algorithms powering Instagram and Facebook feeds, even modest efficiency improvements compound rapidly.
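To see how the two ranges above combine, the following Python sketch multiplies the reported power reduction by the energy share of operating expenses. It is a rough bound rather than a cost model: it assumes energy cost scales linearly with power draw and that the saving applies to the entire energy bill of the affected fleet, neither of which comes from the reporting. The function name is hypothetical.

```python
# Rough bound on operating-cost savings from a more power-efficient accelerator.
# Assumptions (not from the article): energy cost scales linearly with power
# draw, and the saving applies to the whole energy bill of the affected fleet.


def opex_saving_fraction(power_reduction: float, energy_share: float) -> float:
    """Fraction of operating expenses saved.

    power_reduction: fractional drop in power per operation (0.30 = 30%).
    energy_share: energy cost as a fraction of operating expenses.
    """
    if not (0.0 <= power_reduction <= 1.0 and 0.0 <= energy_share <= 1.0):
        raise ValueError("inputs must be fractions between 0 and 1")
    return power_reduction * energy_share


# Corners of the ranges cited in the text.
lowest = opex_saving_fraction(0.30, 0.20)
highest = opex_saving_fraction(0.40, 0.30)
print(f"Operating-cost saving: {lowest:.0%} to {highest:.0%}")
# prints "Operating-cost saving: 6% to 12%"
```

Under these simplifying assumptions, the cited ranges imply a saving of roughly 6% to 12% of operating expenses for workloads moved to the more efficient hardware.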

Google has emphasized these advantages in recent technical documentation. A November 2025 blog post highlighted its JAX AI Stack, a software framework co-designed with TPUs for machine learning production. The company’s cloud revenue grew 34% year-over-year in the third quarter to reach $15.2 billion, driven substantially by AI infrastructure services. Landing Meta as a major TPU customer would validate the technology’s competitiveness against Nvidia while establishing a beachhead in the on-premises market that extends beyond cloud rental arrangements.

Market Structure and Competitive Dynamics

The broader context makes this potential partnership particularly significant. Nvidia’s dominance has created a supply-demand imbalance where scarcity drives pricing power and limits customer flexibility. Creating viable alternatives serves both to provide immediate relief and to introduce competitive pressure that could moderate pricing over time.

The market has already begun to fragment. AMD has gained modest share with its Instinct accelerators. Startups like Cerebras and Graphcore have raised substantial venture capital to pursue specialized approaches. Yet none have achieved the scale or ecosystem maturity to pose a systemic challenge to Nvidia’s position. Google’s TPUs, backed by a decade of internal deployment and refinement plus Alphabet’s vast resources, represent the most credible alternative currently available.

Regulatory considerations also factor into the equation. The CHIPS Act has allocated $39 billion in incentives to bolster domestic semiconductor manufacturing, with an emphasis on advanced nodes critical for AI applications. Meta’s U.S.-focused data center expansion aligns with these policy priorities. Diversifying hardware suppliers, particularly toward U.S.-based alternatives, may ease regulatory scrutiny as policymakers increasingly view AI infrastructure through a national security lens. OECD analyses have highlighted how AI infrastructure investments underpin national competitiveness, elevating hardware decisions beyond commercial considerations into geopolitical imperatives.

For Nvidia, the challenge extends beyond a single customer relationship. Eroding exclusivity among hyperscalers could compress margins and weaken the network effects that have sustained its market position. The company faces additional scrutiny through antitrust probes examining its market dominance. Yet Nvidia has responded with continued innovation, including its Blackwell chip architecture, betting that technological leadership and ecosystem loyalty will sustain its competitive position even as alternatives gain traction.

Implementation Challenges and Timeline Realities

The path from negotiation to deployment involves substantial technical complexity. Meta’s software infrastructure has been optimized for Nvidia’s architecture over years of development. Transitioning workloads to TPUs, which are typically programmed through Google’s JAX framework, requires significant engineering effort to adapt training pipelines, inference systems, and operational tooling. This represents a multi-year undertaking even with substantial resources dedicated to the effort.

The reported 2027 timeline for large-scale TPU deployment reflects these realities. Initial arrangements likely involve more limited cloud-based pilots to validate performance and develop operational expertise before committing to on-premises installations at scale. These pilots would benchmark TPU performance against existing infrastructure, identify optimal workload allocations, and build internal expertise on an unfamiliar architecture.

Even with successful pilots, wholesale replacement of Nvidia hardware appears neither feasible nor desirable. Instead, Meta would likely pursue a hybrid approach, allocating workloads based on efficiency and cost considerations. Training-intensive workloads might remain on GPUs where CUDA optimization provides advantages, while inference tasks migrate to TPUs where energy efficiency offers compelling economics. This model balances immediate operational needs with long-term strategic optionality, avoiding over-dependence on any single supplier.
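The hybrid split described above can be expressed as a simple placement rule. The Python sketch below is purely illustrative: the `Workload` type, the `cuda_dependent` flag, the workload names, and the rule itself are hypothetical and do not describe Meta’s actual scheduling logic.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    TRAINING = "training"
    INFERENCE = "inference"


@dataclass(frozen=True)
class Workload:
    name: str
    kind: Kind
    cuda_dependent: bool  # relies on CUDA-specific kernels or tooling


def assign_backend(w: Workload) -> str:
    """Hypothetical placement rule for a hybrid GPU/TPU fleet."""
    # Training, and anything tied to CUDA, stays on GPUs.
    if w.cuda_dependent or w.kind is Kind.TRAINING:
        return "gpu"
    # Portable inference goes where energy efficiency matters most.
    return "tpu"


# Example workloads; the names are made up for illustration.
fleet = [
    Workload("llm-pretraining", Kind.TRAINING, cuda_dependent=True),
    Workload("feed-ranking", Kind.INFERENCE, cuda_dependent=False),
    Workload("custom-kernel-serving", Kind.INFERENCE, cuda_dependent=True),
]
placement = {w.name: assign_backend(w) for w in fleet}
print(placement)
```

In practice a real allocator would also weigh measured cost per request, available capacity, and porting effort, which is part of what cloud-based pilots would be expected to quantify.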

Strategic Implications

This potential partnership represents a structural evolution in the AI infrastructure market rather than a discrete procurement decision. As computational requirements continue their exponential growth, no single supplier can fully satisfy demand from the handful of hyperscalers driving the industry. Meta has indicated that capital expenditures for 2026 will grow notably from already elevated 2025 levels, suggesting the spending trajectory remains steeply upward with no near-term plateau in sight.

The negotiation also reflects maturing attitudes toward technology sovereignty. Just as hyperscalers have increasingly developed custom networking hardware and storage systems, controlling more of the AI compute stack has become a strategic imperative. This shift favors companies capable of sustaining long-term semiconductor development programs, a category that excludes most software-focused competitors but includes Alphabet, Amazon, and Microsoft alongside Meta.

The concentration of AI infrastructure spending among a small number of hyperscalers amplifies the significance of each decision. These companies collectively represent the bulk of demand for advanced AI accelerators, meaning their procurement choices directly shape market dynamics, pricing structures, and innovation incentives across the semiconductor industry. A shift by Meta toward TPUs would signal to other hyperscalers that credible alternatives exist, potentially triggering similar evaluations and further fragmenting what has been a remarkably concentrated market.

For Google, success in this negotiation would validate years of TPU development and manufacturing investment while opening a revenue stream that extends beyond cloud services into direct hardware sales. For Nvidia, the outcome represents a test of whether technological leadership and ecosystem advantages can sustain near-monopoly market share in the face of determined efforts by both customers and competitors to create alternatives.

The outcome of Meta’s discussions with Google will reverberate across the industry regardless of whether a formal agreement materializes. The negotiations themselves demonstrate that alternatives to Nvidia’s dominance are gaining credibility at the highest levels of decision-making, which alone may influence pricing, allocation priorities, and partnership terms throughout the market. In an industry where infrastructure decisions shape competitive trajectories for years and capital commitments run into the tens of billions, such shifts carry consequences far beyond any individual transaction. The question is no longer whether the AI hardware market will diversify, but rather how quickly and completely that transformation will unfold.